As 10GbE storage connectivity becomes more popular, the number of 10GbE connections you can get on a blade server becomes a consideration. In this blog post, I’ll review the offerings each blade vendor has to help you easily decide which works best for your project.

In the good ole days (just a few years ago), I used to recommend a minimum of 8 x 1Gb Ethernet ports on blade servers for virtual environments. This was by no means the perfect answer, but it did allow for a pair of NICs for vSphere management, a pair for vMotion, a pair for VMs and a pair for other network needs like backups. Today, however, 10Gb Ethernet is more prevalent in many datacenters, so the old way of sizing blade servers does not really work anymore. A pair of 10GbE NICs should be sufficient for many workloads, but as more companies become confident loading up virtualization hosts with VMs, two 10GbE ports simply aren’t enough. In fact, many blade server vendors, like Cisco and Dell, are offering 4 x 10GbE ports standard on their blade servers, so I thought it would be fun to look at Cisco, Dell, HP and IBM’s blade server offerings to see the maximum number of 10GbE ports that could be achieved. [In the spirit of full disclosure, I work for Dell, so any discrepancies you find below are not meant to drive business to Dell; they simply reflect my inability to understand the truth. Feel free to correct any mistakes in the comments below.]

Cisco

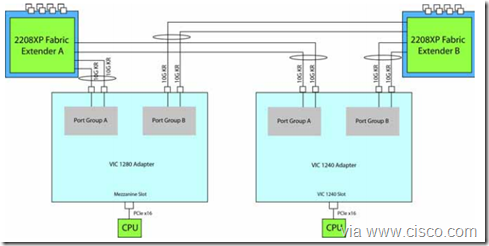

For this exercise, I decided to look only at the 2 socket blade server models that use the Intel E5-2600 CPU. For Cisco, this is the Cisco UCS B200 M3. The B200 M3 requires the VIC1240 NIC, which is a 4 x 10GbE NIC. When you add the VIC1280, you add an additional 4 x 10GbE so that provides you with a maximum of 8 x 10GbE ports – 4 going to Fabric Extender (FEX) A and 4 going to FEX B.

Dell

The Dell equivalent to the UCS B200 M3 would be the Dell PowerEdge M620 blade server. When this server was originally released, it was only offered with a 2 x 10GbE Network Daughter Card (NDC); however, in June Dell announced a 4 x 10GbE Broadcom NDC that can be used on this model. The M620 blade server also has two mezzanine expansion slots which can each hold a 2 x 10GbE card, so with the 4-port NDC the Dell PowerEdge M620 has a maximum of 8 x 10GbE ports.

HP

The HP ProLiant BL460c Gen8 is a bit of a different story from Cisco and Dell. The BL460c only offers 2 x 10GbE ports on the LOM. It also has 2 mezzanine card slots, each capable of holding a 2 x 10GbE NIC. This gives the HP ProLiant BL460c Gen8 a maximum of 6 x 10GbE ports.

IBM

Looking at the IBM BladeCenter HS23, we see that you can get 2 x 1GbE on the motherboard. In addition, you can add a 2 x 1GbE CIOv daughter card and a 4 x 10GbE CFFh daughter card, which brings the HS23 to a maximum of 4 x 10GbE ports (in addition to 4 x 1GbE ports).

The newest IBM blade offering, the Flex System x240 Compute Node, is a bit different from its HS23 cousin. The Flex System x240 appears to come with a 2 x 10GbE NIC in the base configuration and includes 2 expansion card slots which can each hold a 2 x 10GbE NIC. That brings the Flex System x240 compute node to a maximum of 6 x 10GbE ports. However, IBM also offers a 40GbE card for this system, which puts the “true, theoretical” maximum at 2 x 10GbE and 4 x 40GbE. To my knowledge, no one else offers a 40GbE NIC for blade servers at this time, so if sheer Ethernet bandwidth is what you are looking for, IBM’s Flex System x240 appears to win the battle.

Summary

In summary, each vendor has a solid selection of 10GbE offerings. In all reality, for virtualization, you probably won’t need more than 4 x 10GbE ports, but now you know what the current market offers from the top blade server vendors. Let me know your thoughts in the comments below.
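For readers who want the numbers side by side, here is a minimal Python sketch that adds up the 10GbE port counts exactly as stated in the post above. The dictionary layout and the names `BLADES`, `max_10gbe_ports` and `TOTALS` are my own illustration, not anything from a vendor tool, and the adapter labels are simplified. The 40GbE option on the x240 is left out because it is not a 10GbE port count.

```python
# 10GbE port counts per adapter, as stated in the post (not vendor-verified).
# Each entry is (adapter label, number of 10GbE ports); labels are simplified.
BLADES = {
    "Cisco UCS B200 M3": [("VIC1240", 4), ("VIC1280", 4)],
    "Dell PowerEdge M620": [("NDC", 4), ("mezzanine 1", 2), ("mezzanine 2", 2)],
    "HP ProLiant BL460c Gen8": [("LOM", 2), ("mezzanine 1", 2), ("mezzanine 2", 2)],
    "IBM BladeCenter HS23": [("CFFh", 4)],
    "IBM Flex System x240": [("base NIC", 2), ("expansion 1", 2), ("expansion 2", 2)],
}


def max_10gbe_ports(adapters):
    """Sum the 10GbE ports across all adapters on one blade."""
    return sum(ports for _, ports in adapters)


# Maximum 10GbE ports per blade, keyed by model name.
TOTALS = {name: max_10gbe_ports(adapters) for name, adapters in BLADES.items()}

if __name__ == "__main__":
    # Print the blades from most to fewest 10GbE ports.
    for name, total in sorted(TOTALS.items(), key=lambda item: -item[1]):
        print(f"{name}: {total} x 10GbE")
```

Swapping in different adapter options is just a matter of editing the lists, which makes it easy to redo the comparison as vendors release new cards.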

Kevin Houston is the founder and Editor-in-Chief of BladesMadeSimple.com. He has over 15 years of experience in the x86 server marketplace. Since 1997 Kevin has worked at several resellers in the Atlanta area, and has a vast array of competitive x86 server knowledge and certifications as well as an in-depth understanding of VMware and Citrix virtualization. Kevin works for Dell as a Server Sales Engineer covering the Global Enterprise market.

Disclaimer: The views presented in this blog are personal views and may or may not reflect any of the contributors’ employer’s positions. Furthermore, the content is not reviewed, approved or published by any employer.

Just a bit of clarification on the IBM x240 server: you are correct that there are models that include a 2-port 10Gb Ethernet LAN-on-Motherboard (LOM) option, but if you choose to install a mezzanine adapter in mezz. slot 1, the LOM is disconnected from the chassis. Fortunately, IBM offers both 2-port and 4-port 10Gb Ethernet mezz. adapters.

Currently, the maximum number of 10Gb Ethernet ports on an x86 mezz card is 4. (Power nodes offer an 8-port Converged Ethernet mezz.) It is possible to install 2 Ethernet mezz adapters, giving you a total of 8 x 10Gb Ethernet ports on an x240 (or x220) compute node.


Exactly correct on the Flex System. One more thing: in order to use all 4 ports of those 4-port mezz NICs, you need to purchase the software upgrade for the switches in the chassis. That software upgrade license costs as much as the switch, so it greatly increases the cost. If you don’t purchase the software upgrade for the switches, you will only be able to use the first 2 ports of each mezz card.
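The licensing point above can be sketched in a few lines of Python. This is only an illustration of the rule as described in this comment (2 usable ports per mezz card without the switch upgrade, all ports with it); the function name `usable_10gbe_ports` and its parameters are hypothetical and do not correspond to any IBM tool or API.

```python
def usable_10gbe_ports(mezz_cards, ports_per_card=4, switch_upgrade=False):
    """Return how many 10GbE ports are usable on one compute node.

    Assumption taken from the comment above: without the switch software
    upgrade, only the first 2 ports of each mezz card are usable.
    """
    if switch_upgrade:
        enabled_per_card = ports_per_card
    else:
        enabled_per_card = min(ports_per_card, 2)
    return mezz_cards * enabled_per_card


if __name__ == "__main__":
    # Two 4-port mezz cards, with and without the switch upgrade.
    print(usable_10gbe_ports(2))
    print(usable_10gbe_ports(2, switch_upgrade=True))
```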

Currently, the x240 M5 blade with the CN4058S card supports up to 8 x 10Gbps ports per PCI slot, for a total of 16 x 10Gbps ports = 160Gbps per 2-socket blade slot. However, the Flex System chassis currently scales to only 6 ports per slot (the EN4093R can scale up to 3 switches), not 8, so the total supported is still 2 cards of 6 x 10Gbps = 120Gbps per slot.

Flex System also supports an 8-socket blade using x880 E7 v3 processors, so the maximum bandwidth for a single blade (4 x880 blades joined together) works out to 4 x 2 x 120Gbps = 960Gbps.
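The bandwidth arithmetic in these last two comments can be checked with a short Python sketch. The port counts and the 4 x 2 multipliers are taken directly from the comments; the function and variable names are hypothetical, and nothing here has been checked against IBM documentation.

```python
PORT_GBPS = 10        # each port is 10Gbps
CARDS_PER_SLOT = 2    # two cards per 2-socket blade slot, per the comments


def slot_bandwidth_gbps(ports_per_card, cards=CARDS_PER_SLOT, port_gbps=PORT_GBPS):
    """Aggregate Ethernet bandwidth for one blade slot, in Gbps."""
    return ports_per_card * cards * port_gbps


# What the card itself supports: 8 ports per card.
CARD_LIMIT_GBPS = slot_bandwidth_gbps(8)

# What the chassis switching supports today: 6 ports per card.
CHASSIS_LIMIT_GBPS = slot_bandwidth_gbps(6)

# The 8-socket figure uses the multipliers from the comment: 4 x 2 x 120Gbps.
EIGHT_SOCKET_GBPS = 4 * 2 * CHASSIS_LIMIT_GBPS

if __name__ == "__main__":
    print(f"Card limit per slot: {CARD_LIMIT_GBPS}Gbps")
    print(f"Chassis limit per slot: {CHASSIS_LIMIT_GBPS}Gbps")
    print(f"8-socket blade: {EIGHT_SOCKET_GBPS}Gbps")
```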